A lot of companies are selling “AI” to law enforcement. What they’re really selling is a surveillance pipeline: a cloud network that quietly turns everyday movements into searchable history.

They call it “public safety video analytics”.

In practice, it’s often mass tracking, run by vendors that have repeatedly shown they can’t - or won’t - protect civil liberties and data privacy, or even adhere to the basic standards expected of police governance.

This isn’t a hypothetical risk. It’s already happening.

When technology outruns governance, people lose rights

Automatic License Plate Recognition (ALPR) has clear benefits, such as its proven ability to find a missing child or track a stolen vehicle. However, the same system, designed for maximum data collection and easy sharing, can turn into a persistent, scalable tracking tool for ordinary people not suspected of any crime.

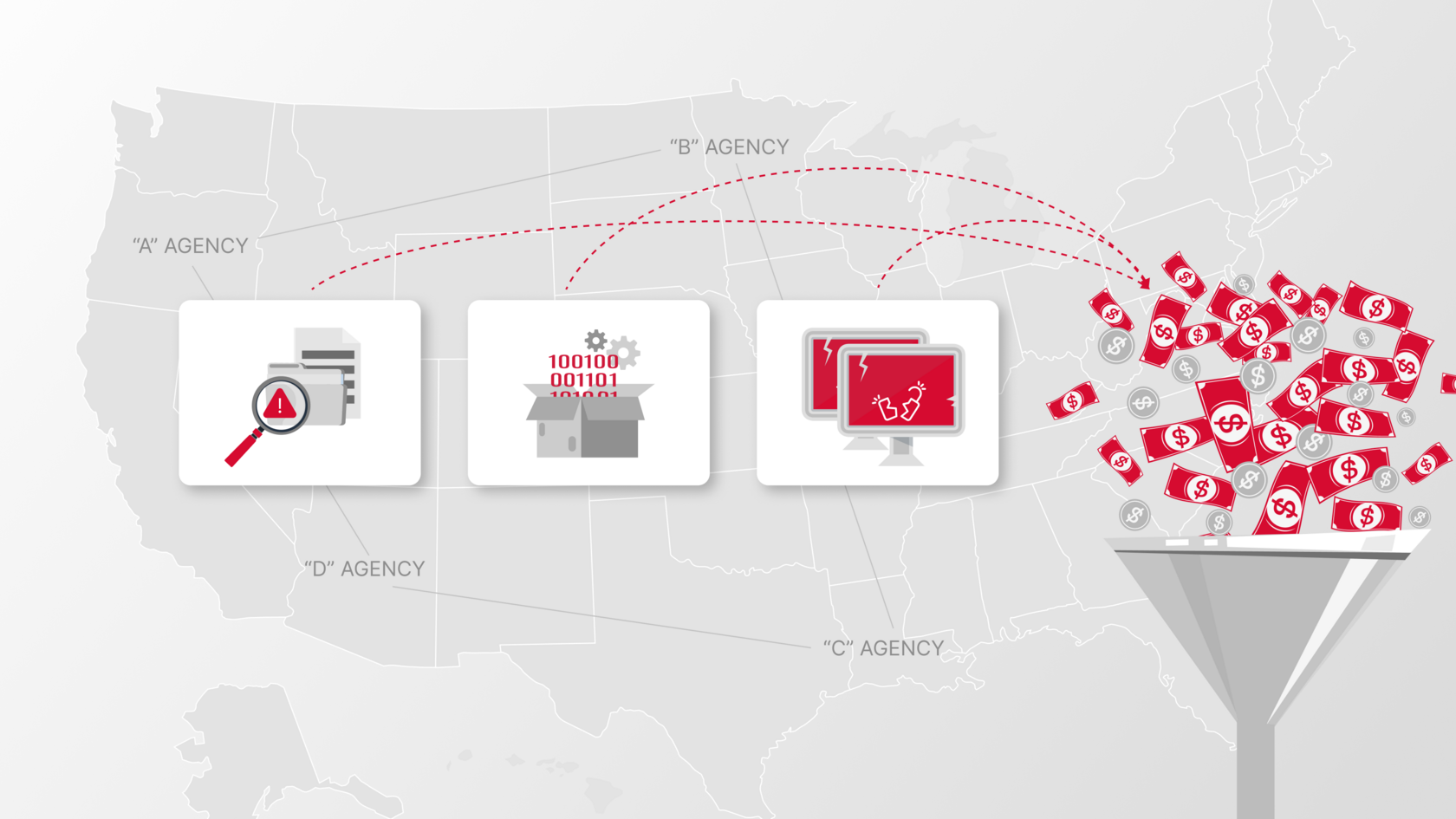

A major US ALPR provider built a vast data network used by thousands of agencies. The result wasn’t just “efficient policing”. The system effectively became a nationwide location database that made it easy to follow drivers again and again, building movement histories over weeks and months without a meaningful purpose.

The technology did what it was designed to do. But the governance failed, and the public paid the price.

What actually went wrong, and why it keeps happening

These failures aren’t isolated incidents. They’re structural. The business model rewards collecting, storing and sharing data, because that’s what makes the product powerful. Yet this very power is also what makes it dangerous.

Here’s what repeatedly shows up when these systems are investigated.

“Sharing” becomes a loophole for bypassing the law

Once the license plate data moves into a vendor’s cloud system, it escapes local laws. Suddenly, this data becomes accessible to police or federal agencies in other locations without the safeguards voters and legislators thought were in place. This data-sharing is a loophole that allows agencies to legally bypass restrictions on certain police investigations.

No real accountability: “Search first, justify later”

If logs are weak, audits are rare, and searches aren’t tied to real case documentation, abuse is bound to happen. That’s how surveillance tools get used for personal vendettas, political targeting, or tracking people with no legitimate law-enforcement purpose. If no one has to justify their search, people will abuse the system.

Security that isn't fit for purpose

The sheer volume of sensitive location and video data collected by companies that use AI-driven video analytics demands top-tier security, yet researchers and investigations have repeatedly uncovered major security flaws, including weak devices, exposed interfaces, and unprotected feeds. When the system is insecure, it’s not just “bad IT”. It’s a public risk.

History of allegations, lawsuits and public backlash

Civil liberties groups and journalists have repeatedly voiced concerns about mass surveillance, unconstitutional tracking, biased use and unauthorized data sharing. The underlying issue is not just a couple of bad employees. It’s a system that normalized abuse and made it difficult to detect.

If a vendor can’t meet basic standards for privacy, security and accountability, they should not be collecting data on the public.

And yet, these vendors keep winning contract after contract.

That’s the most outrageous part: “AI video analytics for public safety” companies accused of violating laws and rights still get rewarded with public money, because they market their systems using the fashionable term "AI" and the promise of "safety".

This is the moral conflict that cities can’t ignore. Law enforcement agencies exist to uphold the law. But you can’t enforce the law with one hand while outsourcing law-breaking with the other. So how can an agency justify partnering with a company accused of building tools that enable illegal surveillance, data misuse or rights violations?

The actual requirements for ethical AI for public safety

Any city using ALPR or video intelligence platforms needs to adopt non-negotiable standards. Not “trust us”. Not “we take privacy seriously”. It needs hard, enforceable requirements that change behavior.

Baseline technical standards

- End-to-end encryption and multi-factor authentication

- Strict role-based access controls and segmentation

- Data minimization (collect less, retain less) and on-device processing where possible

- Tamper-resistant logs and mandatory audit trails
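
To make "tamper-resistant logs" concrete, here is a minimal sketch of one common approach, a hash-chained log, in which every entry commits to the one before it. This is an illustration under stated assumptions, not a description of any vendor's implementation: the function names and the record fields (`user`, `case_id`, `action`) are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the very first entry


def _entry_hash(prev_hash: str, record: dict) -> str:
    """Hash a record together with the hash of the entry before it."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> None:
    """Append a record, chaining it to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "record": record,
        "prev_hash": prev_hash,
        "hash": _entry_hash(prev_hash, record),
    })


def verify_log(log: list) -> bool:
    """Recompute the chain; an edited, reordered or mid-chain deleted entry fails."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this does not detect someone silently dropping the most recent entries, so in practice the latest hash would also need to be stored or published somewhere the log's operator cannot rewrite it.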

Baseline governance standards

- Clear purpose limits (what it can be used for, and what it cannot)

- Strict technical and contractual limits on sharing, including cross-jurisdiction and federal access

- Independent audits and public reporting

- A real accountability mechanism: every sensitive search must be tied to a documented case and made available for later review.

- Agencies must be able to export and verify logs for every query and data sharing action.

The key point is that justification must be an enforced requirement built into the system.

If users know they must link a case ID to their actions, and those actions will be scrutinized, it will be a powerful deterrent against system misuse.
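
As a rough sketch of what "enforced, not optional" can mean in software, the example below refuses any search that lacks a known case ID and a written reason, and records every attempt, including refused ones. All names here (`SearchGate`, `MissingCaseError`, `open_cases`) are hypothetical, and the lookup itself is a stub.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


class MissingCaseError(Exception):
    """Raised when a sensitive search is attempted without a valid case."""


@dataclass
class SearchGate:
    open_cases: set                      # case IDs from a records system (assumed)
    audit_log: list = field(default_factory=list)

    def search(self, user: str, case_id: str, reason: str, plate: str) -> list:
        allowed = bool(reason.strip()) and case_id in self.open_cases
        # Log the attempt before anything else, so refusals are reviewable too.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "case_id": case_id,
            "reason": reason,
            "plate": plate,
            "allowed": allowed,
        })
        if not allowed:
            raise MissingCaseError("no case ID, no search")
        return self._lookup(plate)

    def _lookup(self, plate: str) -> list:
        # Stub standing in for the real query backend, which is out of scope.
        return []
```

The design choice that matters is that the check sits in the only path to the data, so a user cannot skip the justification step and fill it in afterwards.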

Where IREX fits

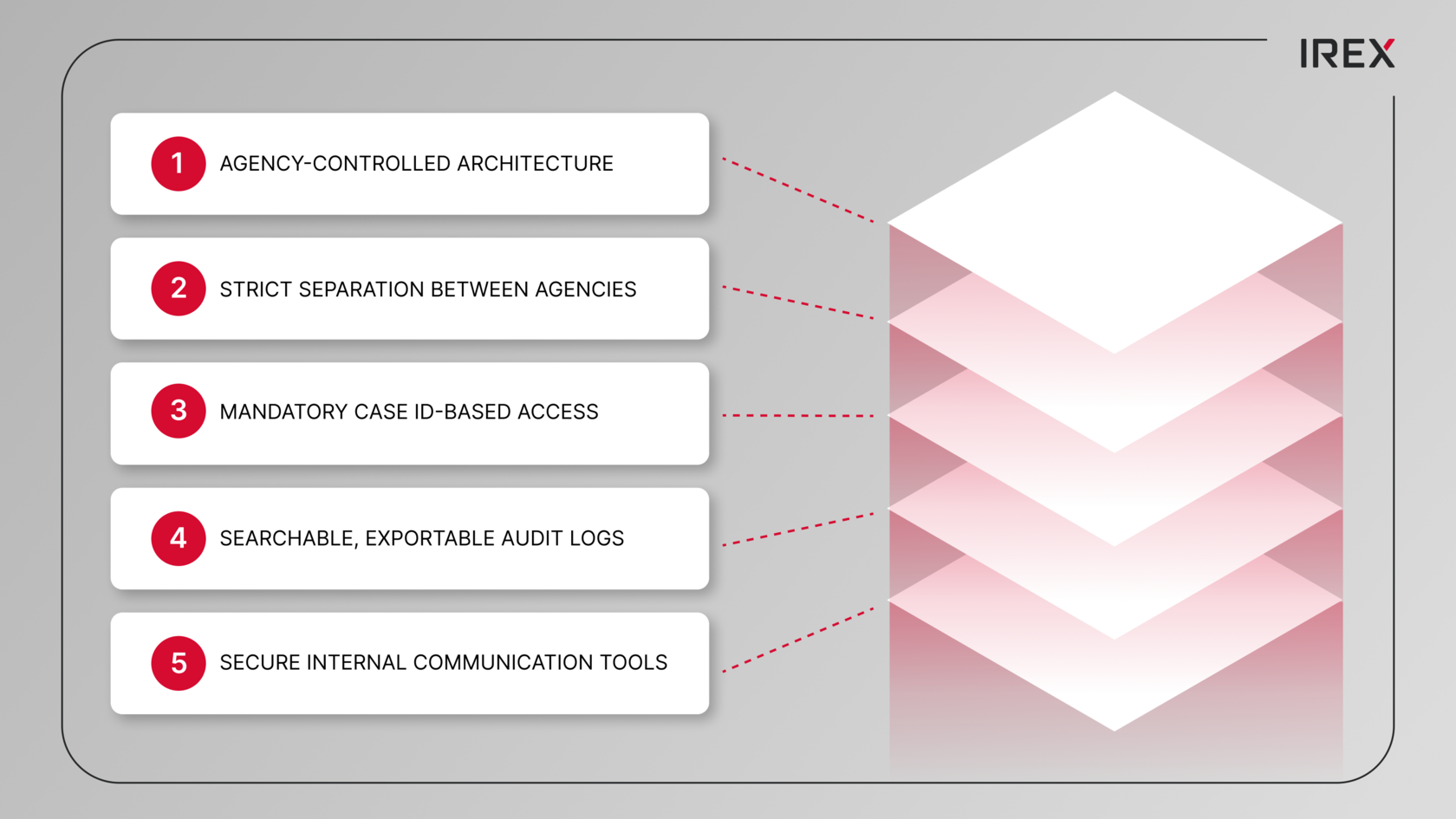

IREX was built specifically to prevent the failures we keep seeing within surveillance vendor networks. Instead of relying on marketing rhetoric or issuing privacy statements, it’s designed with technical safeguards to proactively deter abuse and ensure accountability.

What it looks like in practice:

- Agency-controlled architecture ensures the customer retains control over data sovereignty, retention, and compliance, preventing vendor cloud services from creating legal or technical loopholes.

- Strict separation between agencies prevents casual data access by other departments.

- Mandatory case-based access ensures that sensitive actions can’t be executed without corresponding documentation.

- Searchable, exportable audit logs make oversight effective and verifiable.

- Secure internal communication tools prevent data leakage through consumer apps and shadow channels.

The lesson from repeated ALPR scandals is simple: convenience without governance becomes a civil liberties disaster.

It is time to end the public financing of systems that violate rights.

If you’re a city official, procurement officer, or public safety leader:

- Stop buying “AI surveillance” systems that can’t prove rigorous security and auditability.

- Mandate case-specific logging, independent audits and strict sharing controls.

- Make all system usage policies and transparency reports publicly available.

- Terminate contracts when vendors can’t uphold baseline protections.

If you’re a resident:

- Ask your city who has access to your location data, how long it’s kept, and who it’s shared with.

- Demand the audit logs. Demand the policies. Demand the proof.

- Tell your representatives that public safety doesn’t require mass tracking and doesn’t justify funding companies that profit from violating fundamental rights.

The bottom line is:

A surveillance system that can be abused will be abused.

And a government that repeatedly finances such a system is actively endorsing that result.

So here is a checklist for your next council meeting

Start with this question: “Show us plain-language, written proof of all technical and contractual safeguards that prevent abuse and unlawful data sharing”.

Transparency

- Full contract and all amendments, with no “confidential” carveouts for privacy/security terms

- Vendor data policy: what’s collected, where it’s stored, how long it’s kept, who can access it

- A public-facing use policy: what the system is allowed to do, and explicitly what it’s not allowed to do

Hard limits on collection and storage

- Short default retention, longer only with documented, case-specific needs

- Data minimization: collect only what’s necessary, not “everything because we can”

- A ban on building “movement histories” unless tied to a documented case and legal standard
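
The retention rule in this list can be expressed as a small, testable policy check. The sketch below is only an illustration: the 30-day default is a made-up value, not a recommendation, and `should_purge` is a hypothetical name.

```python
from datetime import datetime, timedelta, timezone

DEFAULT_RETENTION = timedelta(days=30)  # illustrative value only


def should_purge(captured_at: datetime, now: datetime, case_id=None,
                 retention: timedelta = DEFAULT_RETENTION) -> bool:
    """Purge a plate read once it is older than the default window,
    unless it is held for a documented case."""
    if case_id:
        return False  # held under a case; release would be handled separately
    return now - captured_at > retention
```

A council can ask a vendor to show the equivalent rule in their own system, along with evidence that the purge job actually runs.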

Close data-sharing loopholes

- No default cross‑jurisdiction sharing; opt‑in only with written justification

- No federal access unless explicitly approved, logged and publicly disclosed

- Clear prohibitions on using the system to bypass state/local restrictions through third-party access
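
"No default sharing" amounts to a default-deny rule: nothing leaves the agency unless an explicit, complete grant exists for that recipient. A minimal sketch, with hypothetical names and fields:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SharingGrant:
    recipient: str       # agency that would receive the data
    justification: str   # written justification on file
    approved_by: str     # person or body that signed off


def may_share(recipient: str, grants: list) -> bool:
    """Default deny: allow sharing only with an explicit, complete grant."""
    for grant in grants:
        if (grant.recipient == recipient
                and grant.justification.strip()
                and grant.approved_by.strip()):
            return True
    return False
```

With an empty grant list, every request is refused, which is the behavior the checklist asks for.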

Real accountability

- Mandatory case ID for every sensitive search: no case ID, no search

- Detailed logs: who searched, what, why, and how results were used

- Independent audits at least annually and after major changes or incidents

- Clear penalties for misuse: discipline policies, access revocation and referral pathways

Verifiable security instead of blind trust

- End-to-end encryption and MFA for every user

- Role-based access controls and strict separation between agencies

- Regular third-party security testing with publicly available summaries

- Breach notifications with strict deadlines and public disclosure

Public oversight and reporting

- Quarterly transparency reports: number of searches, case-linked searches, shares, out-of-policy incidents

- Civilian oversight to review audits and compliance

- Clear complaint and escalation process outside internal affairs
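
If audit logs are exportable, the quarterly figures listed above can be recomputed by anyone with access to the export rather than taken on trust. The sketch below assumes a hypothetical log format in which each entry has an `action` field plus optional `case_id` and `out_of_policy` fields.

```python
from collections import Counter


def quarterly_summary(entries: list) -> dict:
    """Count searches, case-linked searches, shares and flagged incidents."""
    counts = Counter()
    for entry in entries:
        if entry["action"] == "search":
            counts["searches"] += 1
            if entry.get("case_id"):
                counts["case_linked_searches"] += 1
        elif entry["action"] == "share":
            counts["shares"] += 1
        if entry.get("out_of_policy"):
            counts["out_of_policy_incidents"] += 1
    keys = ["searches", "case_linked_searches", "shares", "out_of_policy_incidents"]
    return {key: counts[key] for key in keys}
```

A gap between `searches` and `case_linked_searches` is the number an oversight body would want explained first.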

Procurement red lines

- City can export and validate all logs at any time

- Termination clause for repeated misuse, security failures or unlawful sharing

- “No dark patterns”: no default sharing, hidden data partnerships or secondary monetization.

And always remember:

Unless every sensitive search can be tied to a documented case and independently audited, this tool is not public safety - it’s mass surveillance.

If the vendor won’t agree to strict sharing limits and verified audit logs, you should assume misuse is a feature, not a bug.