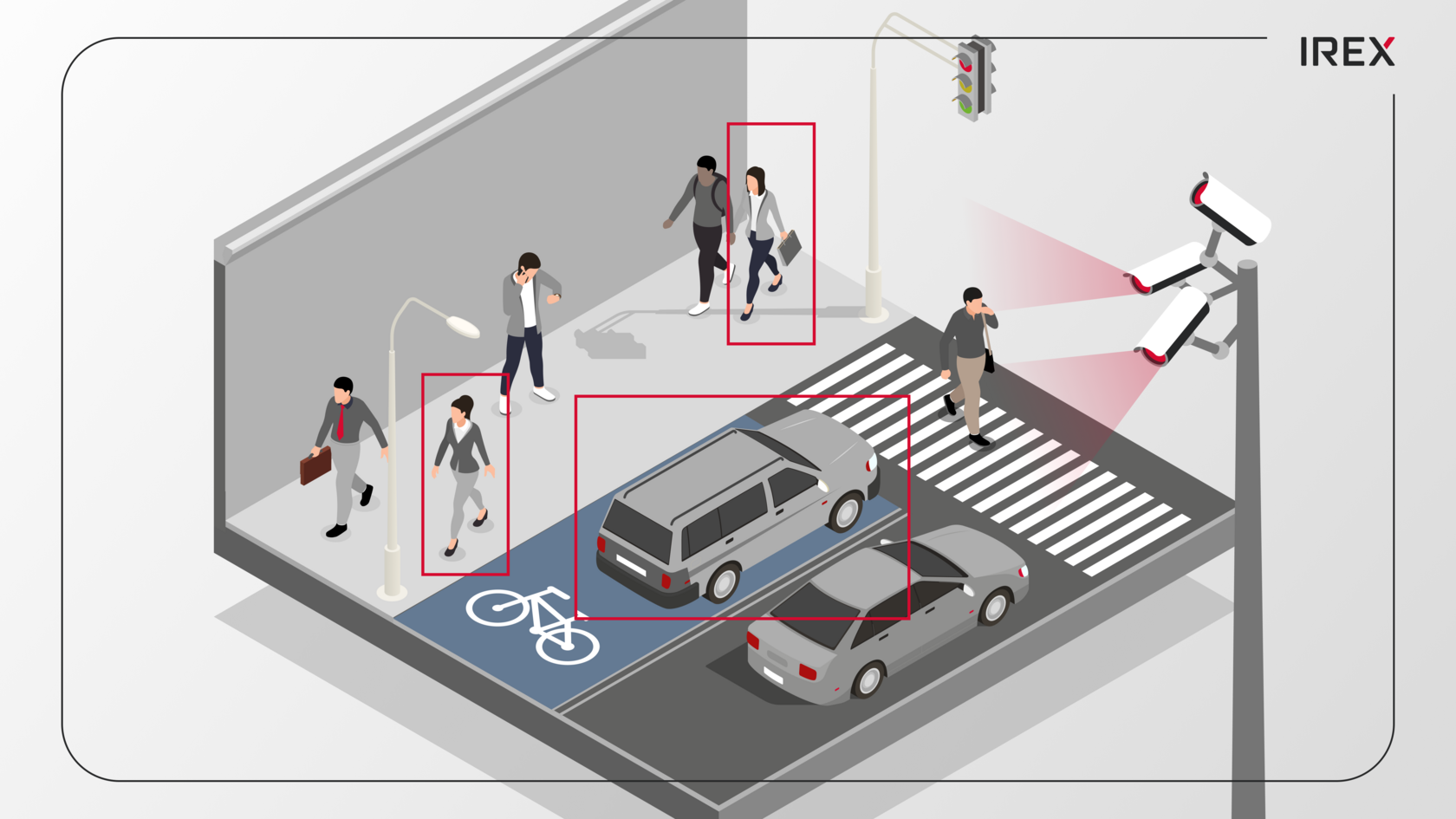

The rise of AI-powered public safety video analytics presents an existential challenge to information security and privacy. Tools designed for public safety, such as those aiding in finding a missing child, can be easily misused to create a perpetual surveillance network over ordinary citizens.

Ongoing lawsuits and investigations involving a prominent US-based Automatic License Plate Recognition (ALPR) provider demonstrate how uncontrolled use of this technology has compromised civil liberties through data misuse. The company established a massive data network for thousands of agencies, transforming a public safety tool into an instrument for widespread warrantless tracking, privacy invasion, and data-sharing violations.

“The company has recently been feeling the heat after the revelation that data from its national license plate scanner network was (and likely still is being) shared with agencies including ICE. Every community in the nation that is home to their cameras should look at the user agreement between their police department and the company, to see whether it contains a clause stating that the customer ‘hereby grants the vendor a worldwide, perpetual, royalty-free right and license’ to ‘disclose the Agency Data… for investigative purposes.’”

- American Civil Liberties Union (ACLU)

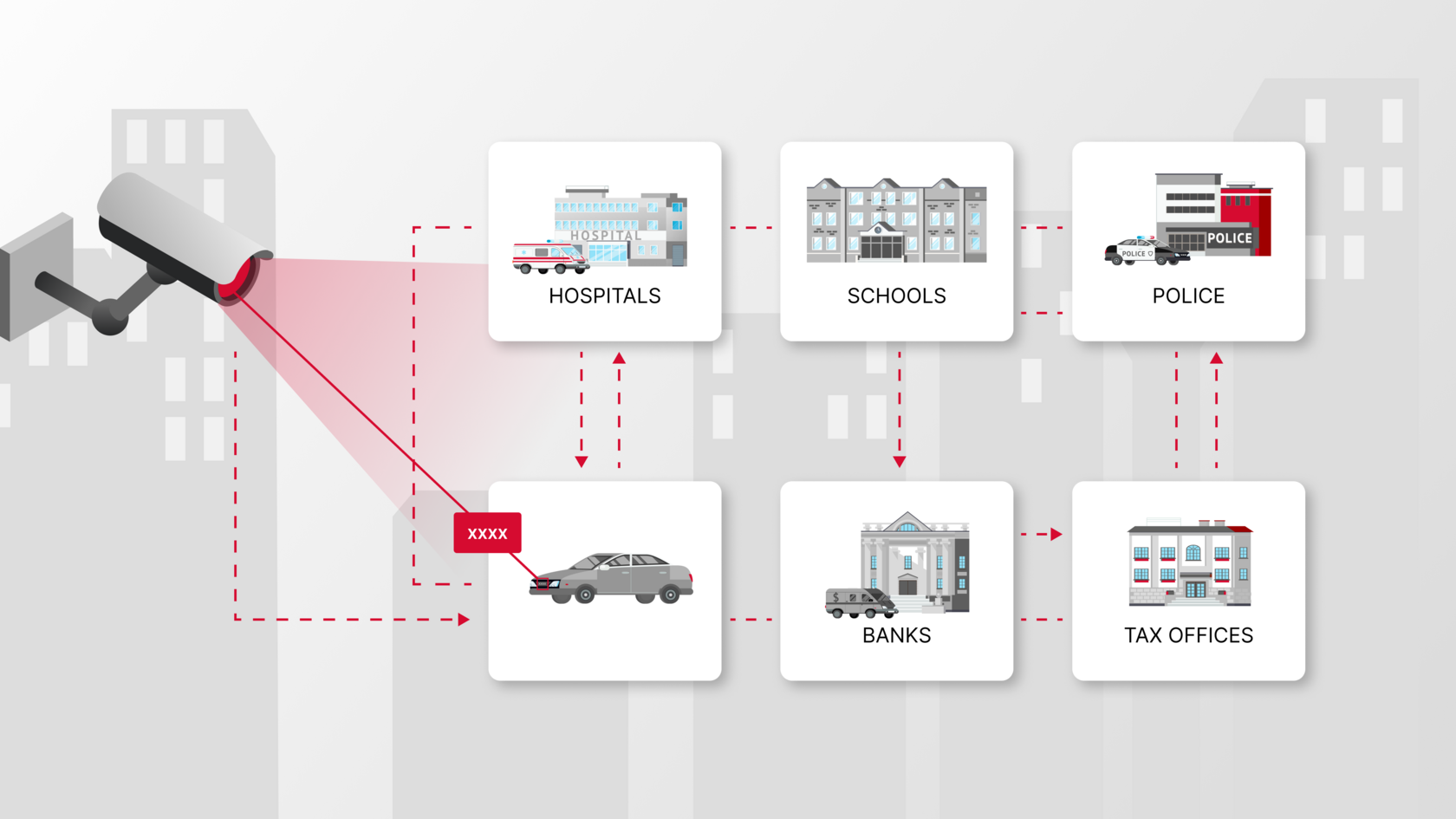

Investigations showed that agencies used this ALPR network to create detailed movement histories, following individual drivers hundreds of times over months with little to no judicial oversight. Data was shared with out-of-state and federal agencies, bypassing state-level restrictions, including in sensitive reproductive-health investigations. The technology functioned as designed; the governance did not. A combination of poor access control, inadequate audits, and the absence of strict data movement boundaries allowed this mass location tracking system to thrive.

What went wrong with the ALPR operation

Several recurring problems emerged:

- Overly broad data sharing: Local restrictions were easily evaded once license plate data entered the vendor’s cloud, enabling cross-jurisdictional and federal access.

- Lack of accountability and audits: Logging was insufficient and was not designed to tie sensitive searches to specific Case IDs, making it difficult to distinguish legitimate work from abuse (e.g., tracking ex-partners or political opponents). The model encouraged "search first, justify later".

- Inadequate security: Technical security for devices and portals was poor, with documented vulnerabilities (physical access exploits, network exposure, unencrypted data, outdated software). Misconfigured web interfaces even exposed video feeds of children on playgrounds.

Key issues and allegations:

- Mass surveillance and unauthorized sharing: The company’s national ALPR network is criticized for enabling warrantless mass surveillance and illegally bypassing state sanctuary laws by sharing data collected by its AI-powered public safety video analytics with federal agencies like ICE and CBP.

- Discriminatory misuse: Allegations include agencies targeting specific ethnic groups with insulting searches and querying data for non-law enforcement purposes, such as abortion cases.

- Security vulnerabilities: An independent researcher documented 51 security issues in the vendor's hardware/software, including physical access and network flaws.

- Legal challenges: The vendor faces federal probes and lawsuits from groups like the EFF and ACLU over alleged Fourth Amendment violations and excessive tracking.

The resulting backlash led to over 30 localities terminating their contracts since early 2025.

"In the end, it was just clear that this wasn't going to be a technology that was going to be well received or that we could continue to use," Flagstaff Mayor Becky Daggett told NPR. The council voted to end the contract.

- National Public Radio (NPR)

Baseline standards for AI-powered public safety video analytics systems

To protect civil liberties, a secure AI video analytics system must be built upon a foundation of robust technology and governance.

Technological expectations:

- End-to-end encryption and multifactor authentication

- Strict role-based access controls and network segmentation

- Data minimization and on-device processing

- Mandatory logging and tamper-proof auditing
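
As an illustration of the last point, one common way to make an audit log tamper-evident is to chain each entry to the hash of the one before it. The sketch below is a minimal example under stated assumptions, not a description of any vendor's implementation: the field names (`user`, `action`, `case_id`) and the helpers `append_entry` and `verify_chain` are hypothetical. A chain like this detects edits, reordering, and removal of entries from the middle of the log; detecting truncation of the newest entries additionally requires storing the latest hash somewhere the log's operator cannot change it.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Canonical JSON so the same record always produces the same hash.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list, record: dict) -> dict:
    """Append a record, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, record),
    }
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return True only if every entry still matches its recorded hash chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical audit records for illustration.
audit_log = []
append_entry(audit_log, {"user": "officer_17", "action": "plate_search", "case_id": "C-1001"})
append_entry(audit_log, {"user": "officer_17", "action": "export", "case_id": "C-1001"})
intact_before = verify_chain(audit_log)

# Simulate someone quietly rewriting an earlier entry.
audit_log[0]["record"]["case_id"] = "C-9999"
intact_after = verify_chain(audit_log)
```

Here `intact_before` is `True` and `intact_after` is `False`: the rewritten entry no longer matches its stored hash, so an independent auditor can see that the log was altered.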

Ethical and governance expectations:

- Clear purpose limitation and transparency for data collection

- Strict sharing controls (technical and contractual limits on cross-jurisdiction/federal access)

- Accountability (independent audit, impact assessments, appeal mechanisms)

- Customers must have the ability to validate and download logs for every data query and share action.
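
The technical half of "strict sharing controls" can be sketched as a deny-by-default policy check that runs before any data leaves the owning agency. This is only an illustrative sketch: the agency names, jurisdiction levels, purposes, and the `may_share` helper are all assumptions made for the example, and a real deployment would pair such a check with contractual limits.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShareRequest:
    owner_agency: str      # agency that collected the data
    requester_agency: str  # agency asking for access
    requester_level: str   # "local", "state", or "federal" (illustrative)
    purpose: str
    case_id: str


# Hypothetical allowlist maintained by the owning agency, not by the vendor.
SHARING_POLICY = {
    "city_pd": {
        "allowed_requesters": {"county_sheriff"},
        "allowed_levels": {"local"},
        "allowed_purposes": {"missing_person", "stolen_vehicle"},
    }
}


def may_share(req: ShareRequest, policy: dict = SHARING_POLICY) -> tuple:
    """Deny by default; allow only when every condition is explicitly met."""
    rules = policy.get(req.owner_agency)
    if rules is None:
        return False, "no sharing policy defined for owner"
    if not req.case_id.strip():
        return False, "missing case ID"
    if req.requester_agency not in rules["allowed_requesters"]:
        return False, "requester not on allowlist"
    if req.requester_level not in rules["allowed_levels"]:
        return False, "jurisdiction level not permitted"
    if req.purpose not in rules["allowed_purposes"]:
        return False, "purpose not permitted"
    return True, "allowed"


ok = may_share(
    ShareRequest("city_pd", "county_sheriff", "local", "missing_person", "C-1001")
)
denied = may_share(
    ShareRequest("city_pd", "federal_agency", "federal", "immigration", "C-2002")
)
```

The first request is allowed; the second is refused with the reason "requester not on allowlist". Returning a reason alongside the decision means each refusal can itself be written to the audit log.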

When these standards are met, the system not only remains an effective public safety tool but also establishes a framework of accountability. That accountability changes behavior, because personnel know they must be able to justify every action they take.

IREX's comprehensive strategy to resolve these critical issues

IREX was explicitly built to prevent the failures we keep seeing in surveillance vendor networks. The system delivers verifiable results, not just standard marketing claims. Our video analytics platform is NIST-certified and meets high security standards. The main advantage is enabling agencies to maintain full control over their own data location, retention, legal compliance and data sovereignty, without relying on a vendor's cloud for governance.

Technological and ethical features of IREX:

- Secure private cloud architecture: Ensures agencies maintain full control, backed by consistent encryption and hardened infrastructure.

- Role-based, multi-tenant access control: Guarantees strict data separation; one agency can’t access another's data.

- Comprehensive logging, Case ID and audit: Mandatory Case ID for all sensitive actions and detailed, searchable logs to enable independent auditing and justification of every use.

- Integrated encrypted corporate messenger: A built-in messenger for secure operational communication and evidence sharing, preventing sensitive data from leaking through external, unsecure channels.

- Ethical AI for public safety: IREX is explicitly built to respect privacy and ethical norms of free societies, focusing strictly on public-safety needs instead of enabling general public tracking.
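
To make the access-control and Case ID ideas above concrete, here is a minimal sketch of how tenant isolation, role permissions, and a mandatory Case ID could be enforced in a single authorization check. It is not IREX's actual code: the role names, the `C-1234` Case ID format, and the `authorize` function are assumptions chosen purely for illustration.

```python
import re

CASE_ID_PATTERN = re.compile(r"^C-\d{4,}$")  # assumed format, illustration only

# Hypothetical roles and the actions each one may perform.
ROLE_PERMISSIONS = {
    "analyst": {"plate_search"},
    "supervisor": {"plate_search", "export"},
}
SENSITIVE_ACTIONS = {"plate_search", "export"}


class AccessDenied(Exception):
    """Raised when a request fails any authorization rule."""


def authorize(user: dict, action: str, resource_tenant: str, case_id=None) -> None:
    """Raise AccessDenied unless tenant, role, and Case ID rules all pass."""
    if user["tenant"] != resource_tenant:
        raise AccessDenied("cross-tenant access is not allowed")
    if action not in ROLE_PERMISSIONS.get(user["role"], set()):
        raise AccessDenied("role does not permit this action")
    if action in SENSITIVE_ACTIONS and not (case_id and CASE_ID_PATTERN.match(case_id)):
        raise AccessDenied("a valid case ID is required")


def attempt(user: dict, action: str, tenant: str, case_id=None) -> str:
    """Helper for the demo: return "ok" or the denial reason."""
    try:
        authorize(user, action, tenant, case_id)
        return "ok"
    except AccessDenied as exc:
        return str(exc)


analyst = {"name": "a.lee", "tenant": "city_pd", "role": "analyst"}

results = [
    attempt(analyst, "plate_search", "city_pd", "C-1001"),         # permitted
    attempt(analyst, "plate_search", "county_sheriff", "C-1001"),  # other tenant
    attempt(analyst, "export", "city_pd", "C-1001"),               # role too low
    attempt(analyst, "plate_search", "city_pd"),                   # no Case ID
]
```

Only the first request succeeds. The other three are rejected for crossing a tenant boundary, exceeding the role's permissions, and omitting a Case ID, which inverts the "search first, justify later" pattern described earlier.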

The failures of a major ALPR provider show that convenience without governance leads to system misuse and privacy violations. By implementing mandatory Case IDs, transparent auditing, strict controls and a secure internal messenger, IREX puts in place clear boundaries to prevent the misuse seen in other AI-powered public safety video analytics systems.